A 26-Person Startup Defeats Giant AI – The ‘Small Model’ Becomes a Weapon for SMEs in a Reversal of Fortune


OpenAI has thousands of engineers. Arcee has only 26. Yet, here’s why they can win.

“AI belongs to large corporations” – isn’t that what you think?

OpenAI employs around 3,000 people, and Google’s AI division has even more. They train massive models with hundreds of billions of parameters using billions of dollars in funding. This is a realm that even mid-sized companies cannot enter, let alone small businesses.

However, now a startup with just 26 people is starting to win against these giants in specific domains.

The company is Arcee, based in the United States. Their open-source language model has outperformed major models on certain benchmarks, and the operational costs are a fraction of those of the massive models.

This reversal structure of “small models defeating large models” holds significant implications for small and medium-sized enterprises (SMEs).

Why are “smaller” models stronger?

It may seem counterintuitive, but bigger AI models are not always better.

Massive models such as GPT-4 (estimated at around 1.8 trillion parameters) boast versatility: they claim to do “anything reasonably well.” However, this versatility also means they are not optimized for any specific task.

Arcee’s approach is the opposite. The company starts from small to mid-sized base models and applies fine-tuning (additional training) specific to particular industries or tasks. By sacrificing versatility, these models achieve higher accuracy than large models in their targeted areas.

To illustrate this with a cooking analogy: a massive model is like a central kitchen of a large chain that can “make anything.” In contrast, a specialized small model is like a local sushi restaurant that has mastered sushi. In terms of taste, the latter wins.

The cost advantage of “small models” in numbers

The cost difference is overwhelming.

- API usage cost for massive models: Approximately $30 to $60 per 1 million tokens for GPT-4 class.

- Self-hosting cost for small specialized models: Around $100 to $500 per month for equivalent processing (when using cloud GPU instances).

This means that once monthly processing exceeds a certain threshold, running a small model in-house becomes significantly cheaper.
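To make that threshold concrete, here is a minimal Python sketch using the article's own price ranges. It assumes self-hosting is a flat monthly fee and that the API bills linearly per token; it ignores engineering time, setup costs, and whether a small model can actually handle the workload. The function names are illustrative.

```python
def break_even_tokens(monthly_hosting_usd: float, api_usd_per_million: float) -> float:
    """Monthly token volume at which self-hosting costs the same as the API."""
    return monthly_hosting_usd / api_usd_per_million * 1_000_000


def cheaper_option(tokens_per_month: float, monthly_hosting_usd: float,
                   api_usd_per_million: float) -> str:
    """Compare a flat hosting bill against a per-token API bill for one month."""
    api_cost = tokens_per_month / 1_000_000 * api_usd_per_million
    return "self-host" if monthly_hosting_usd < api_cost else "api"


# Best and worst cases from the ranges quoted above.
for hosting, price in [(100, 60), (500, 30)]:
    tokens = break_even_tokens(hosting, price)
    print(f"${hosting}/month server vs ${price} per 1M tokens: "
          f"break-even at {tokens:,.0f} tokens/month")
```

With these numbers, the break-even point falls between roughly 1.7 million tokens per month (a $100 server against $60 per million tokens) and about 16.7 million tokens per month (a $500 server against $30 per million tokens).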

Even more important is speed. Models with fewer parameters run inference (the process of generating actual responses) faster. Where a massive model may take 2 to 3 seconds for a single response, a small model can return an answer in about 0.3 seconds. In tasks that require real-time responses, such as customer service chatbots or inventory management systems, this speed difference translates directly into user experience.

Evidence of reversal from China – The significance of the 754B model

Meanwhile, China’s AI lab Z.ai (智谱AI) has announced GLM-5.1, a large model with 754 billion parameters. What’s noteworthy is its licensing: it is released under the MIT license, meaning it is completely open-source and free for commercial use.

This signifies that “we have entered an era where even massive models can be obtained as open-source.”

Models from OpenAI and Anthropic can only be accessed via API, and their pricing is a black box. Even if prices rise, switching costs are high. Open-source models, by contrast, can be run on in-house servers: data never leaves the premises, and costs stay under the company’s control.

Running a 754B class model in-house requires substantial GPU resources, but by distilling it (a technique for transferring knowledge from a large model to a smaller one) to around 70B to 130B parameters, it can be operated at a realistic cost. Companies like Arcee excel in this “distillation + specialization” technology.
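Two back-of-the-envelope pieces help here. The first estimates the memory needed just to hold a model's weights, assuming 2 bytes per parameter (16-bit precision) or 0.5 bytes with 4-bit quantization; real deployments also need memory for the KV cache and activations. The second is a minimal sketch of soft-target knowledge distillation in the style of Hinton et al. (2015), where the student is trained to match the teacher's temperature-softened output distribution. This is a textbook formulation, not Arcee's actual pipeline, which the article does not describe.

```python
import math


def weight_memory_gb(params_billion: float, bytes_per_param: float = 2.0) -> float:
    """Decimal GB needed for the weights alone: 1e9 params * bytes each / 1e9 bytes per GB."""
    return params_billion * bytes_per_param


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; subtracting the max keeps exp() numerically stable."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits: list[float], student_logits: list[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2 as in Hinton et al."""
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)  # student's current predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2


print(weight_memory_gb(754), weight_memory_gb(70), weight_memory_gb(70, 0.5))
print(distillation_loss([3.0, 1.0, 0.0], [0.0, 1.0, 3.0]))
```

By this estimate, a 754B model needs about 1,508 GB for its weights alone at 16-bit precision, while a 70B model needs about 140 GB, or about 35 GB at 4-bit, which is within reach of a single 80 GB GPU.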

What changes for SMEs?

This reversal structure brings three changes for SMEs.

1. Dramatic reduction in “AI usage fees”

Companies that were paying hundreds of thousands of yen monthly for large model APIs could potentially reduce this to tens of thousands of yen with self-hosted small specialized models. This could lead to annual savings of several million yen, significantly impacting the profitability of SMEs.
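The savings arithmetic can be sketched as follows. The figures are hypothetical illustrations inside the article's ranges: 300,000 yen per month on APIs, 50,000 yen per month self-hosted, and a 1,000,000-yen one-time fine-tuning fee, within the range quoted for Japanese support services later in this article.

```python
def annual_savings_yen(current_monthly_yen: int, new_monthly_yen: int,
                       one_time_cost_yen: int = 0) -> int:
    """First-year savings after switching, net of any one-time setup cost."""
    return (current_monthly_yen - new_monthly_yen) * 12 - one_time_cost_yen


def payback_months(one_time_cost_yen: int, current_monthly_yen: int,
                   new_monthly_yen: int) -> float:
    """Months until the one-time cost is recovered by the lower monthly bill."""
    monthly_saving = current_monthly_yen - new_monthly_yen
    if monthly_saving <= 0:
        return float("inf")  # switching never pays for itself
    return one_time_cost_yen / monthly_saving


print(annual_savings_yen(300_000, 50_000))             # 3000000
print(annual_savings_yen(300_000, 50_000, 1_000_000))  # 2000000
print(payback_months(1_000_000, 300_000, 50_000))      # 4.0
```

Under these assumptions, the one-time fee is recovered in four months, and the savings reach 3 million yen per year from the second year on.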

2. The reality of “company-specific AI”

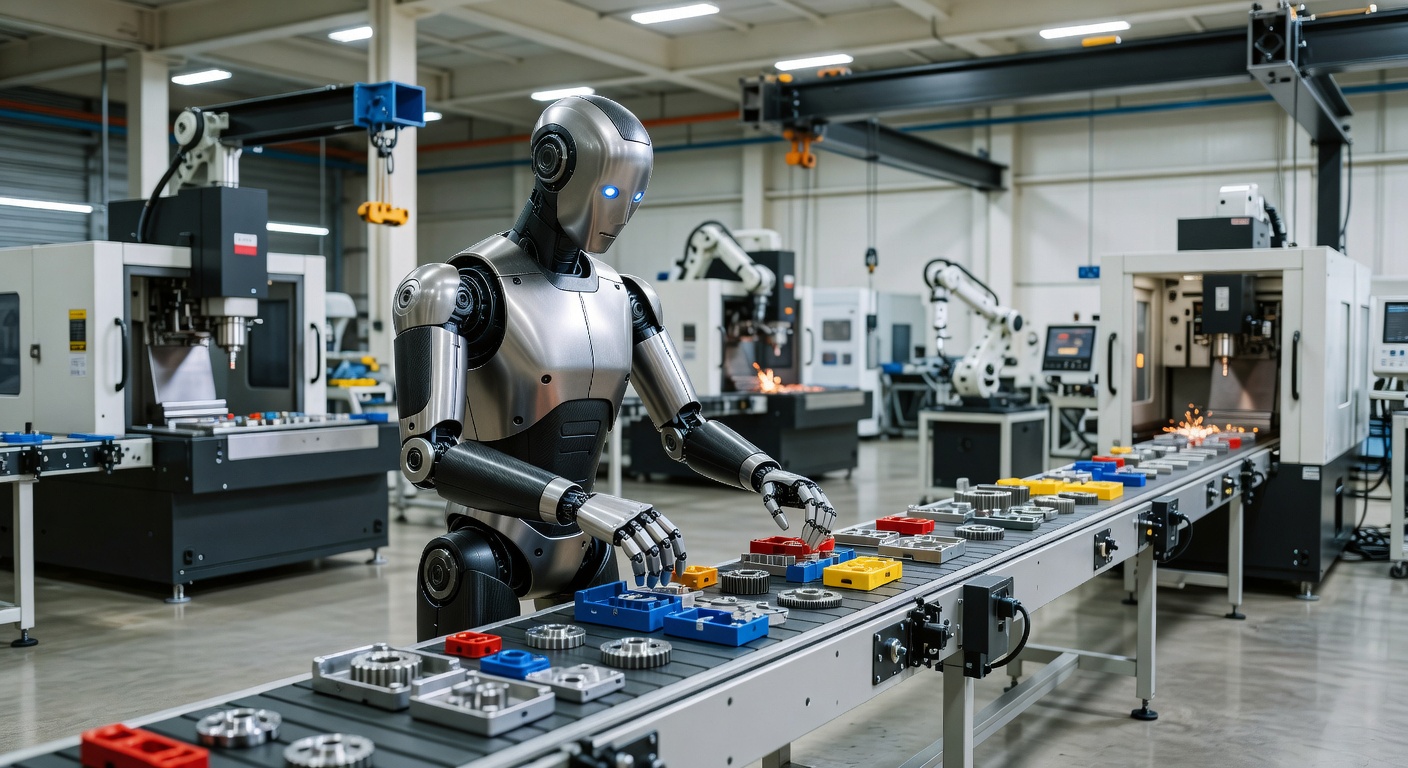

Small models are easier to fine-tune with a company’s own data: image data for quality inspections in manufacturing, customer purchase histories in retail, past estimates in construction. Feeding this data into the model produces a “company-specific AI.” The result could be a reversal: while large corporations use OpenAI’s general-purpose models, SMEs could run their operations with 99% accuracy using specialized models of their own.
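As an illustration of the construction case, the sketch below turns past estimates into training examples. It assumes the common chat-style JSONL layout (one `{"messages": [...]}` object per line with `system`, `user`, and `assistant` roles), which several fine-tuning tools accept; check the exact format your tool or service expects. The records, field names, and prompt are hypothetical.

```python
import json

# Hypothetical past estimates; replace with your own records and field names.
past_estimates = [
    {"job": "Exterior wall repainting, two-story house, 120 m2", "estimate_yen": 1_150_000},
    {"job": "Bathroom renovation, unit bath replacement", "estimate_yen": 890_000},
]

SYSTEM_PROMPT = "You are the estimating assistant for our construction company."


def to_chat_example(record: dict) -> dict:
    """Turn one past estimate into a question/answer pair in chat format."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": f"Please estimate this job: {record['job']}"},
            {"role": "assistant", "content": f"Estimated cost: {record['estimate_yen']:,} yen"},
        ]
    }


def to_jsonl_lines(records: list[dict]) -> list[str]:
    """One JSON object per line; ensure_ascii=False keeps Japanese text readable."""
    return [json.dumps(to_chat_example(r), ensure_ascii=False) for r in records]


def write_jsonl(records: list[dict], path: str) -> None:
    """Write the training file that a fine-tuning tool or service would ingest."""
    with open(path, "w", encoding="utf-8") as f:
        for line in to_jsonl_lines(records):
            f.write(line + "\n")
```

A call such as `write_jsonl(past_estimates, "estimates_train.jsonl")` produces a file with one training example per line. In practice, hundreds or thousands of such records would be needed.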

3. Data remains in-house

The biggest concern for SMEs using ChatGPT in their operations is that “data is sent externally.” Customer information, estimate amounts, contract details with partners—many companies have strict security policies against sending this data to OpenAI’s servers. A small model running on in-house servers resolves this issue.

So, what should SMEs do now?

“I understand that small models are good, but we don’t have AI engineers” – this is a natural reaction.

Here are three practical steps:

① Start by “experimenting” with open-source models

On the Hugging Face platform, thousands of open-source models, including Arcee’s, can be tried for free. Ask them questions related to your business and see how usable they are. Time required: 30 minutes. Cost: $0.
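For readers comfortable with a little Python, the same experiment can be run locally with the Hugging Face `transformers` library. This is a minimal sketch: `Qwen/Qwen2.5-0.5B-Instruct` is only a small placeholder model chosen so the first download is quick; swap in any instruction-tuned model from the Hub, including Arcee's. It assumes a recent `transformers` release whose text-generation pipeline accepts chat-style message lists, and the system prompt is hypothetical. Once the model is downloaded, your questions are processed on your own machine.

```python
# Requires: pip install transformers torch
MODEL_ID = "Qwen/Qwen2.5-0.5B-Instruct"  # placeholder; replace with the model you want to test


def build_messages(question: str) -> list[dict]:
    """Wrap a business question in the chat format instruct models expect."""
    return [
        {"role": "system", "content": "You are an assistant for a small manufacturing company."},
        {"role": "user", "content": question},
    ]


def ask(question: str, model_id: str = MODEL_ID, max_new_tokens: int = 200) -> str:
    """Download the model on first use, run it locally, and return its reply."""
    from transformers import pipeline  # imported here so build_messages works without it

    generator = pipeline("text-generation", model=model_id)
    result = generator(build_messages(question), max_new_tokens=max_new_tokens)
    # With chat input, generated_text is the full conversation; the last message is the reply.
    return result[0]["generated_text"][-1]["content"]
```

For example, `print(ask("Draft a polite reply to a customer asking about a delayed shipment."))`. Creating the pipeline inside `ask` keeps the sketch short; for many questions, create it once and reuse it.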

② Look for support services for specialized model implementation

There’s no need to train models in-house. More companies like Arcee are emerging to “support the construction of specialized models.” In Japan, services that handle fine-tuning for SMEs are beginning to appear, with costs ranging from several hundred thousand to a few million yen, just a fraction of what large consulting firms charge.

③ Decide on “what to specialize in”

This is the most important step. AI models do not perform well in a “can do anything” state. Identify the tasks in your business that occur most often and follow the most fixed patterns, and focus your specialization there.
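One simple way to shortlist candidates is to score each task by how often it occurs and how much of it follows a fixed pattern. The sketch below multiplies the two; the scoring rule, task names, and numbers are hypothetical, and in practice you would also weigh the cost of errors and whether usable data exists.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    monthly_volume: int     # how many times the task occurs per month
    patterned_share: float  # rough share of cases that follow a fixed pattern (0.0 to 1.0)

    @property
    def score(self) -> float:
        """Higher means more volume that a specialized model could absorb."""
        return self.monthly_volume * self.patterned_share


def rank_tasks(tasks: list[Task]) -> list[Task]:
    """Highest-volume, most patterned tasks first."""
    return sorted(tasks, key=lambda t: t.score, reverse=True)


tasks = [
    Task("Quote requests", 400, 0.8),
    Task("Order-status inquiries", 600, 0.9),
    Task("Contract negotiations", 20, 0.2),
]

for t in rank_tasks(tasks):
    print(f"{t.name}: {t.score:.0f}")
```

Here, order-status inquiries would be the first candidate for a specialized model, while contract negotiations stay with people.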

“Small” does not mean “weak”

A startup with 26 people defeats giant corporations with thousands of employees. Small models triumph over large models.

This structure mirrors how SMEs can approach their battles.

They cannot win against large corporations in an all-out war. However, by narrowing their focus to their areas of expertise and leveraging specialized AI as a weapon, they can exploit the gaps where large companies are using general tools.

Being “small” is not a handicap in the AI era. Rather, it becomes a powerful weapon of specialization and agility. What Arcee has proven is exactly that.
